Google Keyword Position Checker API: Developer’s Guide


You can tell when rank tracking has gone sideways. Someone has a spreadsheet open, an incognito window on a second monitor, and a half-trusted process for checking the same query three different ways because the result “looked off” the first time.

That method falls apart fast when you manage paid search seriously. A handful of keywords is annoying. A live account with multiple locations, device splits, and search term churn turns it into busywork that nobody should still be doing by hand. If you want dependable rank data for campaign decisions, you need a Google keyword position checker API and a workflow built around it.

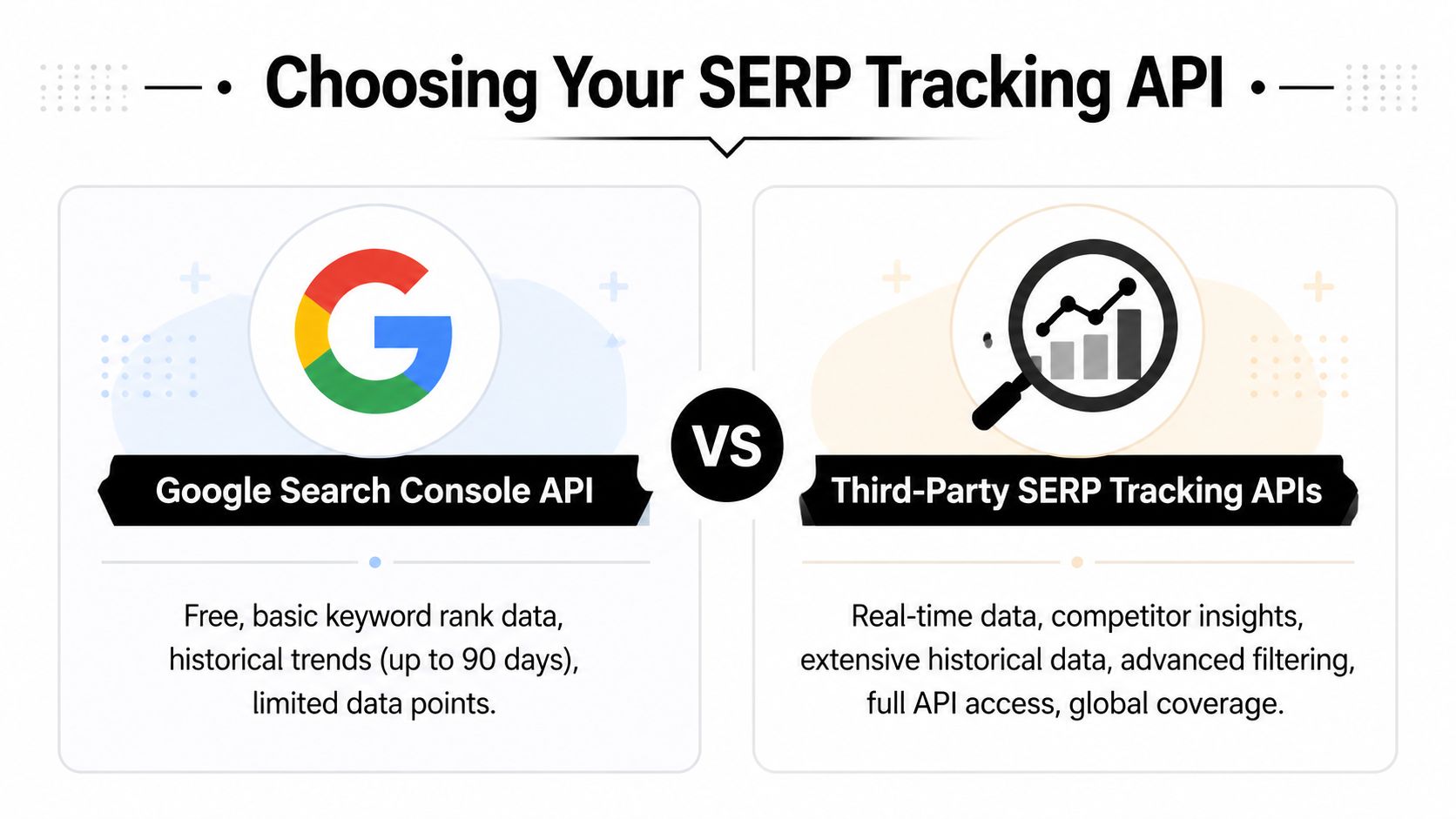

The tricky part is that “Google API” can mean two very different things. One path gives you your own site’s aggregated search performance data. The other gives you direct SERP position data that you can use for competitive checks, geo testing, and PPC automation. They solve different problems, and mixing them up leads to bad implementation choices.

Why Manual Rank Checking Is Obsolete

Manual checks feel free until you count the time, inconsistency, and bad decisions they create. A PPC manager pulls a search terms report, spots a few expensive queries, opens Google, searches them one by one, and tries to guess whether the brand ranks “well enough” organically. By the time that review is done, the data is already stale and the process still isn't repeatable.

The old pain point isn't just speed. It's trust. Google tailors results by location and context, so two people on the same team can check the same keyword and come back with different impressions. That's one reason so many teams moved away from browser checks and toward API-driven collection.

Why hand checks stopped working

Google's own earlier API options never really solved rank tracking for professional use. Google's official Custom Search API, launched around 2009 to 2010, was a poor fit for SEO rank tracking because it offered only 100 free requests per day and required custom search engine setups that returned non-standard results. That gap helped push the market toward third-party providers, which now support over 80% of enterprise SEO workflows for automated rank monitoring, according to a summary of the Google AJAX Search API discussion.

That shift matters for PPC, not just SEO. If you're deciding whether to keep bidding hard on a query, reduce waste, or push a term into a negative workflow, you need data you can pull consistently and compare over time. A repeatable API job gives you that. Manual checks don't.

Practical rule: If a rank-checking process can't run on schedule without a person opening Google, it isn't a reliable process.

A good starting point is to stop treating rank checks as a research task and start treating them like a data pipeline. That means defining the query list, location, device, and domain-matching logic in code. If you're still patching together exports and screenshots, it's worth reviewing alternatives to spreadsheets and ad hoc browser work in this guide to manual keyword tracking alternatives.

One more point. Rank isn't the only quality check you need. When content teams want a fast sanity check on how pages line up with AI-shaped search visibility, a tool like this free AI SEO checker can be useful alongside your rank stack. It doesn't replace a SERP API, but it helps surface visibility issues that a plain position number can miss.

The real dividing line

There are two practical choices:

- Use Google Search Console API when you want first-party data about your own verified property.

- Use a third-party SERP API when you need direct ranking positions, competitor visibility, location targeting, device targeting, or richer SERP context.

That distinction clears up most implementation mistakes.

Accessing Position Data with the GSC API

A common setup looks like this: the SEO team wants rank data, the PPC team wants to know which queries deserve budget, and someone asks for a "Google keyword position checker API." For your own site, the first API to wire up is usually the Google Search Console API. It gives you first-party search performance data for verified properties, including queries, pages, clicks, impressions, CTR, and average position, through the Search Analytics endpoint described in the Google Search Console API documentation.

That matters for Keywordme-style workflows because GSC can answer a very specific question well: where do we already have organic visibility that should influence paid decisions? It will not replace a live SERP tracker, but it is a strong input for finding queries that may deserve lower bids, tighter match types, or new negative keyword rules. If your team still struggles with missing organic query detail in analytics, this guide to keyword not provided in analytics helps explain why GSC data ends up being so important.

What you need before you call it

The setup is simple if the property and permissions are already in place:

- Verify the site in Google Search Console.

- Enable the Search Console API in Google Cloud.

- Authenticate with OAuth or a service account setup that your environment supports.

- Call the `searchanalytics/query` endpoint with the dimensions and date range you plan to analyze.

For rank-related reporting, the useful dimensions are usually query, page, country, and device. In practice, I would start with fewer dimensions than you think you need. Pulling query and page first is often enough to validate the pipeline before adding segmentation that increases row count and handling complexity.
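A minimal Python sketch of that first, narrow pull follows. It assumes the `google-api-python-client` and `google-auth` packages and a service-account key file that has been granted access to the property; the site URL, key path, and helper names are placeholders. The body builder is kept separate from the network call so it can be checked without credentials.

```python
from datetime import date


def build_query_body(start: date, end: date, dimensions=("query", "page"), row_limit=1000):
    """Build a Search Analytics request body; start narrow with query + page."""
    return {
        "startDate": start.isoformat(),
        "endDate": end.isoformat(),
        "dimensions": list(dimensions),
        "rowLimit": row_limit,
    }


def fetch_search_analytics(site_url: str, key_file: str, body: dict) -> list:
    """Run searchanalytics.query for a verified property (hypothetical helper)."""
    # Imported lazily so the body builder works without the Google client libraries.
    from google.oauth2 import service_account
    from googleapiclient.discovery import build

    creds = service_account.Credentials.from_service_account_file(
        key_file, scopes=["https://www.googleapis.com/auth/webmasters.readonly"]
    )
    service = build("searchconsole", "v1", credentials=creds)
    response = service.searchanalytics().query(siteUrl=site_url, body=body).execute()
    # "rows" is absent when the date range has no data.
    return response.get("rows", [])
```

Usage might look like `fetch_search_analytics("sc-domain:example.com", "service-account.json", build_query_body(date(2025, 5, 1), date(2025, 5, 7)))`. Adding `country` or `device` later is a one-argument change to `dimensions`.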

Example request shape

A basic request body looks like this:

```json
{
  "startDate": "2025-05-01",
  "endDate": "2025-05-07",
  "dimensions": ["query", "page"],
  "rowLimit": 1000
}
```

And a typical response row looks like this:

```json
{
  "keys": ["best espresso beans", "https://example.com/espresso"],
  "clicks": 42,
  "impressions": 310,
  "ctr": 0.135,
  "position": 7.4
}
```

The position field is the part people focus on first, but the most significant value comes from the combination of fields. A query with solid impressions, weak CTR, and an average position near page one can be more useful to a PPC team than a vanity ranking report. That pattern often points to terms where ad copy, landing page alignment, or negative keyword filtering can improve paid efficiency.
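One way to turn that pattern into a repeatable filter is sketched below. It reads rows in the response shape shown above (requested with `["query", "page"]` dimensions) and flags queries with meaningful impressions, below-target CTR, and an average position close to page one. The thresholds are illustrative assumptions, not recommended values; tune them against your own account.

```python
def flag_ppc_candidates(rows, min_impressions=200, max_ctr=0.05, max_position=12.0):
    """Return queries with solid impressions, weak CTR, and near-page-one position.

    Assumes keys[0] is the query and keys[1] is the page URL.
    """
    flagged = []
    for row in rows:
        query, page = row["keys"][0], row["keys"][1]
        if (
            row["impressions"] >= min_impressions
            and row["ctr"] <= max_ctr
            and row["position"] <= max_position
        ):
            flagged.append({
                "query": query,
                "page": page,
                "impressions": row["impressions"],
                "ctr": row["ctr"],
                "position": row["position"],
            })
    # Largest opportunities (by impressions) first.
    flagged.sort(key=lambda r: r["impressions"], reverse=True)
    return flagged
```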

What average position means in production

GSC does not return a live rank for a single search result page at a specific moment. It returns an average position across impressions in the selected date range. If a page appeared in different positions for different users, devices, or countries, the API rolls that into one metric.
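To see how one number hides the spread, here is a small worked example. It assumes each segment's position is itself an average over that segment's impressions, so combining segments is an impression-weighted mean; treat it as an approximation of Google's aggregation, not its exact method.

```python
def weighted_average_position(segments):
    """Combine per-segment average positions into one impression-weighted figure."""
    total_impressions = sum(s["impressions"] for s in segments)
    if total_impressions == 0:
        return None  # no impressions, no meaningful average
    weighted = sum(s["position"] * s["impressions"] for s in segments)
    return round(weighted / total_impressions, 2)
```

With 300 desktop impressions at position 2.0 and 700 mobile impressions at position 9.0, the combined figure is (600 + 6300) / 1000 = 6.9, a position the page never actually held on either device.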

That makes GSC good for trend analysis and portfolio decisions.

It also means you should avoid using it for exact SERP diagnostics. If a stakeholder asks, “What rank are we in Chicago on mobile right now?”, GSC is the wrong source for that request.

For a tool like Keywordme, the practical use case is joining GSC query data to paid search data on a schedule. Daily or weekly pulls work well. Once stored in your warehouse, you can flag queries where organic visibility is improving while CPC stays high, then route those terms into bid reviews, match type changes, or automated negative keyword checks. That is where GSC earns its place in a PPC workflow. Not as a perfect rank monitor, but as a reliable first-party signal tied directly to your own domain.
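A sketch of that flag follows, assuming two stored GSC snapshots (previous and current period) keyed by query, plus average CPC per search term from paid data. The input shapes, the one-position improvement threshold, and the CPC ceiling are hypothetical placeholders.

```python
def flag_bid_reviews(gsc_prev, gsc_curr, paid_cpc, min_gain=1.0, cpc_threshold=2.50):
    """Flag queries whose organic average position improved while CPC stays high.

    gsc_prev / gsc_curr: {query: average_position}; lower position is better.
    paid_cpc: {search_term: average_cpc}.
    """
    flagged = []
    for query, curr_pos in gsc_curr.items():
        prev_pos = gsc_prev.get(query)
        cpc = paid_cpc.get(query)
        if prev_pos is None or cpc is None:
            continue  # need both organic history and paid data to compare
        gain = prev_pos - curr_pos  # positive means organic rank improved
        if gain >= min_gain and cpc >= cpc_threshold:
            flagged.append({"query": query, "position_gain": round(gain, 2), "cpc": cpc})
    return sorted(flagged, key=lambda r: r["cpc"], reverse=True)
```

The output is a review queue, not an automatic bid change; a person or a separate rule decides what to do with each flagged term.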

Key Limitations of the GSC API

The Search Console API is useful, but it has hard limits that matter the moment rank data starts driving bidding or negative keyword decisions.

The biggest issue is scope. GSC only tells you about your verified properties. It does not show competitor positions, and it does not scrape the actual result page. If your workflow depends on seeing who owns the top spots for a query, GSC can't help.

Where GSC falls short in practice

Three problems show up quickly in production use:

- Property-only visibility: You can inspect your own domain, not the full competitive field.

- Average position instead of exact placement: Good for trends. Weak for precise SERP diagnostics.

- No real-time result page context: You don't get a direct view of ads, local packs, featured snippets, or live ranking layouts from the API.

Those gaps matter because paid search decisions often depend on context, not just a summary metric. A query can look “acceptable” in GSC while still being dominated by ads, local results, or stronger competitors in the live SERP.

Why that creates PPC blind spots

Suppose a search term is expensive in Google Ads and your team wants to know whether to keep pushing bids. A GSC average position might say you're visible. But if the actual result page is crowded and your strongest competitor owns the practical click path, average position won't surface that.

This problem gets worse when teams try to reconstruct the missing picture from partial data. That's the same basic reporting gap behind a lot of the frustration in modern organic analysis, especially when keyword-level visibility is obscured or aggregated. If that sounds familiar, this breakdown of keyword not provided analytics hits the broader issue from another angle.

If you're optimizing against today's auction pressure, yesterday's aggregate organic metric is not enough.

The limitation that matters most

For developers, the decisive limitation is this. GSC isn't a SERP retrieval API. It's a performance reporting API.

That distinction changes architecture. If your use case is:

- logging sitewide organic trends,

- mapping queries to pages,

- reviewing historical movement on your own domain,

then GSC is solid.

If your use case is:

- checking a query by city or country,

- comparing mobile and desktop layouts,

- seeing whether competitors outrank you,

- detecting snippets, ads, or local pack placements,

you need a different source.

That's why serious rank tracking stacks usually treat GSC as one input, not the only input. It belongs in reporting and attribution. It does not replace direct SERP collection.

Comparing Third-Party SERP Tracking APIs

A common failure case looks like this. A query appears stable in reporting, PPC spend is rising, and nobody can explain why branded click-through rate is slipping in one market but not another. The missing piece is usually the live result page. For a tool like Keywordme, that matters because rank data is only useful if it can feed actions such as negative keyword rules, competitor-aware bid adjustments, and landing page checks.

Third-party SERP APIs solve a different problem than Google's reporting interfaces. They return the page you asked for, with request-level controls for market, device, and query context, so you can inspect who ranked, what SERP features showed up, and where your domain landed. That is the input a PPC developer can work with.

The useful comparison is not "free vs paid." It is "aggregated property data vs retrievable search results."

What you’re paying for

Paid SERP APIs earn their keep when rank checks need to match media buying conditions. If a campaign is segmented by country, city, language, and device, rank collection has to use those same dimensions or the data will be noisy.

Common advantages include:

- Request-level geo targeting: Country, city, or localized market checks that line up with campaign targeting.

- Device-specific results: Mobile and desktop often produce different competitors, packs, and ad pressure.

- SERP feature visibility: Organic listings, ads, featured snippets, local packs, shopping blocks, and other result types in one response.

- Cleaner parsing: Structured JSON is easier to map into domain matching, rank history, and alerting logic than raw HTML.

There is a cost for that convenience. You have per-request billing, provider quotas, and another dependency in your stack. In practice, those trade-offs are acceptable when the alternative is making bid or negative keyword decisions from incomplete rank context.
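To make the "cleaner parsing" point concrete, here is a minimal sketch that reduces one structured SERP response to the fields PPC logic usually needs. It assumes a response with `organic_results`, `ads`, and `local_results` arrays and a `link` field on each organic result; those key names are modeled on common provider schemas and are assumptions, so check your provider's documentation.

```python
from urllib.parse import urlparse


def _domain(url):
    # .hostname is lowercase and excludes the port; drop a leading "www."
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def summarize_serp(data, target_domain):
    """Summarize rank, matched URL, competitors, and SERP features from one response."""
    our_position, our_url, competitors = None, None, []
    for row in data.get("organic_results", []):
        domain = _domain(row.get("link", ""))
        if domain == target_domain:
            if our_position is None:  # keep only the first (best) match
                our_position, our_url = row.get("position"), row.get("link")
        elif domain and domain not in competitors:
            competitors.append(domain)
    return {
        "position": our_position,
        "matched_url": our_url,
        "ad_count": len(data.get("ads", [])),
        "has_local_pack": bool(data.get("local_results")),
        "top_competitors": competitors[:3],
    }
```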

GSC API vs. Third-Party SERP APIs

| Feature | Google Search Console API | Third-Party SERP APIs |
|---|---|---|
| Primary data type | Your site's search performance data | Direct SERP result data |
| Property verification required | Yes | No |
| Competitor tracking | No | Yes, if you parse and compare domains |
| Location targeting | Limited reporting dimensions | Strong request-level control |
| Device targeting | Reporting by device | Request-level device selection |
| SERP feature visibility | Limited | Stronger visibility into result composition |
| Best use case | Historical performance for your own site | Exact rank checks and competitive analysis |
| Cost model | Free | Paid usage |

Which providers fit which jobs

Provider choice comes down to implementation details more than brand recognition. Some APIs are optimized for live SERP retrieval with clean schemas. Others are better for large SEO datasets, broad keyword research, or multi-engine coverage. Services such as SerpApi, DataForSEO, Scrapingdog, Apify, and similar platforms are usually evaluated on four practical criteria: parameter control, response structure, rate limits, and cost per useful result.

For Keywordme-style PPC workflows, schema quality matters more than feature lists on a pricing page. If the response makes it easy to identify your domain, competing domains, paid placements, and SERP features, it is easier to turn rank checks into automation. That is the difference between a dashboard metric and a workflow input.

A simple selection rule works well. Choose the provider whose API lets you reproduce the same market conditions your campaigns target, then test how much cleanup your parser needs before the data is usable in bidding and search term logic. If you are designing that handoff, this guide to integrating keyword data via API covers the architecture decisions that usually matter first. Teams without in-house engineering often bring in experienced Python developers at this stage because parser quality, retry logic, and normalization work determine whether the feed is reliable enough for automation.

Implementing a Third-Party API with Python

A Python script is usually the fastest way to turn SERP data into something a PPC team can use. The first version does not need a framework. It needs to return consistent rank records for the exact market you bid in, then store them in a format your automation can read.

For a Keywordme-style workflow, the script should handle four jobs cleanly:

- accept a list of keywords,

- request rankings with the correct location and device settings,

- normalize result URLs before matching,

- save output in a durable store your PPC logic can query later.

That last point gets ignored too often. If rank checks only end up in a CSV for manual review, they stay an SEO report. If they land in a database with keyword, landing page, device, and timestamp attached, they become usable signals for bid rules, negative keyword suggestions, and ad group cleanup.

A simple Python pattern

Here's the structure I recommend first:

```python
import os
import re
from urllib.parse import urlparse

import requests

API_KEY = os.getenv("SEARCH_API_KEY")
TARGET_DOMAIN = "example.com"

keywords = [
    "best espresso beans",
    "espresso grinder for home",
    "single origin coffee subscription",
]


def normalize_domain(url):
    # .hostname is already lowercase and drops any port; strip a leading "www."
    hostname = urlparse(url).hostname or ""
    return re.sub(r"^www\.", "", hostname)


def get_position(keyword, location="United States", device="desktop"):
    endpoint = "https://www.searchapi.io/api/v1/search"
    params = {
        "api_key": API_KEY,
        "engine": "google_rank_tracking",
        "q": keyword,
        "location": location,
        "device": device,
        "page": 1,
        "num": 100,
    }
    response = requests.get(endpoint, params=params, timeout=30)
    response.raise_for_status()
    data = response.json()

    # Return the first organic result whose domain exactly matches the target.
    # The URL field name varies by provider ("link" vs "url"); check your schema.
    for row in data.get("organic_results", []):
        result_domain = normalize_domain(row.get("link") or row.get("url", ""))
        if result_domain == TARGET_DOMAIN:
            return row.get("position")
    return None  # not found within the requested depth


if __name__ == "__main__":
    if not API_KEY:
        raise SystemExit("Set SEARCH_API_KEY before running.")
    for kw in keywords:
        pos = get_position(kw)
        print({"keyword": kw, "position": pos})
```

This pattern works because it is predictable. The parser reads a stable JSON response, checks `organic_results`, and returns a position only when the target domain appears. That matters once the output starts feeding PPC decisions. A false match on the wrong subdomain or an unnormalized URL can send the wrong landing page into your bid logic.

The trade-off is coverage versus cost. A simple request loop is enough for a small keyword set or a proof of concept. Once you track by country, city, device, and landing page variant, the request count climbs fast. At that point, clean code matters less than job design, storage, and how you join the rank record back to campaign data.
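The arithmetic is worth running before you pick a plan. This small sketch expands a keyword list across every market and device the campaigns target, then estimates monthly calls; the 30-day month and the example counts are assumptions for illustration, and the estimate ignores caching and retries.

```python
from itertools import product


def build_rank_jobs(keywords, locations, devices):
    """Expand keywords across every market/device combination the campaigns target."""
    return [
        {"keyword": kw, "location": loc, "device": dev}
        for kw, loc, dev in product(keywords, locations, devices)
    ]


def monthly_requests(job_count, checks_per_day=1, days=30):
    """Estimate provider calls per month for a fixed job list (no caching or retries)."""
    return job_count * checks_per_day * days
```

For example, 200 keywords across 5 cities and 2 devices is 2,000 jobs, which is 60,000 requests a month at one check per day before any retries.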

Implementation details that save time later

A few implementation choices make the difference between a useful script and a maintenance problem:

- Store the API key in environment variables. Keep secrets out of source control and deployment logs.

- Normalize domains and, if needed, paths. `www`, subdomains, trailing slashes, and tracking parameters create bad matches.

- Return `None` cleanly. Missing rank data is normal and should not break downstream jobs.

- Persist raw response fields you may need later. URL, title, SERP feature type, and timestamp often become useful after the first reporting request.

- Separate collection from decision logic. Fetch rankings in one job. Apply PPC rules in another. That makes debugging much easier.
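For path-level matching, a minimal standard-library sketch of URL normalization follows. Which query parameters count as tracking noise is an assumption here (`utm_*`, `gclid`, `fbclid`, `msclkid`); extend the set for your own stack.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

# Assumed tracking parameters; anything starting with "utm_" is also dropped.
TRACKING_PARAMS = {"gclid", "fbclid", "msclkid"}


def normalize_url(url):
    """Normalize a result or landing-page URL so equivalent pages compare equal."""
    parts = urlparse(url.strip())
    host = parts.hostname or ""  # lowercase, without port
    host = host[4:] if host.startswith("www.") else host
    path = parts.path.rstrip("/") or "/"  # treat /page and /page/ as the same
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if not k.lower().startswith("utm_") and k.lower() not in TRACKING_PARAMS
    ]
    # Unify the scheme, drop fragments, and sort remaining params for a stable value.
    return urlunparse(("https", host, path, "", urlencode(sorted(query)), ""))
```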

I also recommend storing the matched URL, not just the position. In paid search workflows, rank movement without landing page context is hard to act on. If Keywordme sees a term ranking organically in position 2 with the same page your ads send traffic to, that can support a lower bid or a different match-type strategy. If the ranking URL is a blog post while the ad points to a product page, the PPC action is different.
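That comparison can be encoded as a small rule. The sketch assumes both URLs were normalized the same way before comparison; the action labels and the position cutoff of 3 are hypothetical.

```python
def landing_page_action(organic_position, ranking_url, ad_final_url, strong_cutoff=3):
    """Suggest a PPC review action from organic rank and landing-page alignment."""
    if organic_position is None:
        return "no_organic_coverage"  # paid is carrying this term alone
    same_page = ranking_url == ad_final_url
    if not same_page:
        return "review_landing_page"  # organic and paid send users to different pages
    if organic_position <= strong_cutoff:
        return "test_lower_bid"  # strong organic rank on the page ads already use
    return "keep_bid"
```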

If your team needs help turning a quick script into maintainable application code, it's often worth pulling in experienced Python developers instead of asking a PPC analyst to own production engineering.

For teams building that handoff into campaign automation, this guide to integrating keyword data via API covers the application layer decisions that usually matter once the script works.


What to log besides position

Position alone is too thin for optimization work. Save the query context with every record:

- Keyword

- Location

- Device

- Matched URL

- Position

- Timestamp

That gives you a usable event stream instead of a vanity metric. You can compare rank changes by device, detect when ads are covering terms with weak organic visibility, and spot cases where strong organic coverage justifies negative keyword testing or tighter bid controls.
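A minimal sketch of that event stream as a table follows. `sqlite3` stands in for whatever warehouse you actually use, and the table and field names are illustrative.

```python
import sqlite3
from dataclasses import astuple, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class RankRecord:
    keyword: str
    location: str
    device: str
    matched_url: Optional[str]  # None when the domain did not rank
    position: Optional[int]
    checked_at: str  # ISO-8601 UTC timestamp


def init_store(conn):
    # Column order matches RankRecord's field order, which save_records relies on.
    conn.execute(
        """CREATE TABLE IF NOT EXISTS rank_checks (
               keyword TEXT NOT NULL,
               location TEXT NOT NULL,
               device TEXT NOT NULL,
               matched_url TEXT,
               position INTEGER,
               checked_at TEXT NOT NULL
           )"""
    )


def save_records(conn, records):
    conn.executemany(
        "INSERT INTO rank_checks VALUES (?, ?, ?, ?, ?, ?)",
        [astuple(r) for r in records],
    )
    conn.commit()


def now_utc():
    return datetime.now(timezone.utc).isoformat(timespec="seconds")
```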

Managing API Quotas and Caching Results

A rank tracker usually gets expensive for one reason. It keeps asking the same question after nothing meaningful has changed.

That problem shows up fast in PPC workflows. If Keywordme is checking a query every hour, but the bidding logic only updates once a day, those extra calls do not improve decisions. They just burn API quota and inflate your cost per usable signal.

The fix starts with query value, not infrastructure. Assign a refresh policy based on how the keyword is used. Brand terms tied to aggressive impression share targets may deserve more frequent checks. Informational terms that only feed weekly negative keyword reviews usually do not. The best schedule is the one that matches the action your system will take after the result arrives.
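A refresh policy can be as simple as a lookup from keyword usage to a minimum interval. The usage labels and intervals below are hypothetical; align them with how often each downstream rule actually runs.

```python
from datetime import datetime, timedelta

# Hypothetical refresh intervals keyed by how the rank result gets used.
REFRESH_POLICY = {
    "brand_impression_share": timedelta(hours=6),
    "daily_bidding": timedelta(days=1),
    "weekly_negatives_review": timedelta(days=7),
}


def is_due(usage, last_checked, now):
    """True when a keyword's last check is older than its usage's refresh interval."""
    if last_checked is None:
        return True  # never checked
    return now - last_checked >= REFRESH_POLICY[usage]
```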

Control request volume before it becomes a billing problem

Production systems need guardrails. A bulk import, a retry storm, or a bad cron schedule can waste a month's quota in a day if you let every worker hit the provider directly.
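Retries are the usual source of a retry storm, so backoff is worth getting right early. This sketch uses exponential backoff with "full jitter," one common variant that keeps parallel workers from retrying in lockstep. `TransientError` is a hypothetical wrapper; map your HTTP client's timeouts and 429/5xx responses onto it.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for timeouts, 429s, and 5xx responses from the provider."""


def with_backoff(call, max_attempts=5, base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Retry `call` on TransientError with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the job scheduler record the failure
            # The delay ceiling doubles per attempt (1s, 2s, 4s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, ceiling))
```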

Use a few simple controls:

- Rate-aware batching: Send requests in controlled batches that stay inside provider limits.

- Backoff on transient failures: Retry timeouts and temporary errors with increasing delays.

- Queued jobs: Run rank collection in background workers instead of from user-facing requests.

- Priority rules: Check revenue-driving keywords first, and push low-value terms to slower schedules.

- Deduplication at the job level: If the same keyword, device, and location combination is already queued, merge it instead of creating another request.

That last point matters more than teams expect. Duplicate jobs often cost more than legitimate rank checks.
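A minimal in-memory version of job-level deduplication is sketched below. A shared queue would need the same key enforced in the store itself (for example, a unique constraint), which this sketch does not cover.

```python
def dedupe_jobs(jobs):
    """Merge queued jobs sharing keyword, device, and location; keep the first of each."""
    seen = set()
    unique = []
    for job in jobs:
        key = (job["keyword"].strip().lower(), job["device"], job["location"])
        if key not in seen:
            seen.add(key)
            unique.append(job)
    return unique
```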

Cache the full query context

Caching cuts waste only if the cache key matches the request shape exactly. For rank data, that usually means at least keyword, country or location, language, device, and search depth. If you leave out device or locale, you'll return a valid result for the wrong SERP and make bad PPC decisions from it.

A practical cache key looks like this:

rank:best espresso beans:united_states:en:mobile:top100
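A small helper that produces keys in that format is sketched below; the function name and the whitespace handling are assumptions. If keywords can contain colons, hash the keyword portion instead of embedding it raw.

```python
import re


def rank_cache_key(keyword, location, language, device, depth):
    """Build a cache key that encodes every parameter that changes the SERP."""
    loc = re.sub(r"\s+", "_", location.strip().lower())
    kw = " ".join(keyword.lower().split())  # collapse stray whitespace
    return f"rank:{kw}:{loc}:{language.lower()}:{device.lower()}:top{depth}"
```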

TTL should follow use case. Short windows fit active monitoring on high-value terms. Longer windows fit reporting, budget pacing, and negative keyword review jobs. If your paid optimization does not react inside the cache window, fetching fresh data sooner has little value.

For Keywordme, I would usually split cache policy into tiers:

| Query tier | Typical use | Cache approach |
|---|---|---|
| High-spend commercial terms | Bid decisions, landing page checks | Short TTL, higher crawl priority |
| Mid-tier terms | Trend monitoring, ad coverage review | Moderate TTL |
| Long-tail and research terms | Discovery, batch analysis | Long TTL, low priority |
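A minimal in-memory sketch of tiered expiry follows. The TTL values are placeholders rather than benchmarks, and a shared cache such as Redis would use its native key expiry instead of this class.

```python
import time

# Illustrative TTLs per tier, in seconds.
TIER_TTL = {
    "high_spend": 6 * 3600,
    "mid_tier": 24 * 3600,
    "long_tail": 7 * 24 * 3600,
}


class TieredRankCache:
    """In-memory cache whose entries expire according to the keyword's tier."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: caller should fetch fresh data
            return None
        return value

    def set(self, key, value, tier):
        self._store[key] = (self._clock() + TIER_TTL[tier], value)
```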

Async collection keeps the app stable

Bulk rank collection belongs in background workers. A user should not wait on hundreds of SERP requests just to open a dashboard or trigger a sync.

A cleaner pattern is to enqueue the batch, process it with workers, write normalized results to storage, and let downstream PPC rules read from that stored dataset. That separation also makes audits easier. You can inspect what was requested, what was cached, what failed, and what changed before a bidding or negative keyword rule fired.
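The worker side of that pattern can be sketched with `asyncio`, which here stands in for a real queue and worker pool. `fetch` is a hypothetical async provider wrapper; the semaphore caps concurrent calls, and failures are captured per job so one bad request does not sink the batch.

```python
import asyncio


async def collect_batch(jobs, fetch, max_concurrency=5):
    """Run rank lookups with a cap on concurrent provider calls; results keep job order."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def run(job):
        async with semaphore:
            try:
                return {"job": job, "result": await fetch(job), "error": None}
            except Exception as exc:  # record and continue; retries live elsewhere
                return {"job": job, "result": None, "error": repr(exc)}

    return await asyncio.gather(*(run(job) for job in jobs))
```

The returned list can be written to storage as-is, which gives the audit trail of what was requested and what failed.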

Here is the operating model that holds up under real traffic:

| Practice | Why it matters |
|---|---|
| Caching | Reduces duplicate API calls and lowers cost |
| Queue workers | Keeps the application responsive |
| Batched jobs | Prevents quota spikes |
| Priority scheduling | Reserves budget for keywords tied to PPC decisions |
| Request deduplication | Stops duplicate jobs from draining quota |

Good quota management is not just an engineering concern. It determines whether rank data is cheap enough to wire into actual PPC automation. If each fresh position check has a clear downstream use, such as bid restraint on strong organic terms or negative keyword review on low-fit SERPs, the tracking stack stays both useful and affordable.

Integrating Rank Data into PPC Workflows

Rank data becomes valuable when it changes what you do in Google Ads. If all you do is store positions in a dashboard, you've built reporting, not optimization.

The strongest use case is joining organic rank signals with paid search terms. That lets you spot where your ad spend is compensating for weak organic visibility, where you're paying for terms you already cover well, and where competitors own the result page strongly enough that you should be more selective.

Three PPC workflows worth building

Start with these.

- Bid support for weak organic coverage: When a search term performs well in paid search but organic rank is poor, that term may justify stronger paid support while SEO catches up.

- Negative keyword review for low-fit SERPs: If the organic result page is consistently dominated by competitors or mismatched intent, that query may deserve a closer negative keyword review rather than more spend.

- Landing page alignment checks: If the page ranking organically doesn't match the page you're promoting in ads, you may have a message or intent mismatch.

In this context, location becomes a real factor, not just a reporting dimension.

Location and device are not optional

One gap in the market is the connection between location-based organic rank and Google Ads performance. Rank checker APIs often fall short on device-level ranking data, and that matters because desktop and mobile experiences can influence bid strategy and Quality Score decisions, as noted on RankActive's keyword position checker API page.

That point gets overlooked all the time. A keyword can look fine nationally and still underperform in the city where you spend. Or it can rank acceptably on desktop while mobile visibility is poor. If your ad groups are geo-targeted or device-sensitive, rank collection has to mirror that structure.

Organic rank is only useful for PPC if the market, device, and intent match the way you buy traffic.

A practical join model

At minimum, join these fields between your paid and organic datasets:

- Search term or mapped keyword

- Location

- Device

- Landing page

- Organic position

- Paid cost and conversions

From there, you can build decision rules such as:

- spend more carefully where organic coverage is already strong,

- protect terms where paid drives value but organic is weak,

- review negatives where the result page shows poor commercial alignment.

That’s the core promise of rank APIs for PPC teams. Not “SEO visibility” as a vague concept. Better allocation of bids, cleaner negatives, and a tighter connection between what the auction costs and what the organic channel already earns.
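The join fields and decision rules listed above can be sketched as one function. It joins on (term, location, device) so a term can land in different buckets per market and device. The `low_fit_serp` flag is a hypothetical field you would compute earlier from SERP composition, and the rank cutoffs, conversion minimum, and action labels are placeholders.

```python
def classify_terms(paid_rows, organic_rows, strong_rank=3, weak_rank=20, min_conversions=1):
    """Join paid and organic rows on (term, location, device) and suggest a review bucket.

    paid_rows: dicts with term, location, device, cost, conversions.
    organic_rows: dicts with term, location, device, position (None if not ranking),
    and an optional low_fit_serp flag.
    """
    organic = {(r["term"], r["location"], r["device"]): r for r in organic_rows}
    decisions = []
    for row in paid_rows:
        org = organic.get((row["term"], row["location"], row["device"]), {})
        position = org.get("position")
        if org.get("low_fit_serp"):
            action = "review_negatives"  # result page shows poor commercial alignment
        elif position is not None and position <= strong_rank:
            action = "spend_carefully"  # organic already covers this market/device
        elif row["conversions"] >= min_conversions and (position is None or position > weak_rank):
            action = "protect_paid"  # paid drives value where organic is weak
        else:
            action = "no_change"
        decisions.append({**row, "organic_position": position, "action": action})
    return decisions
```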

Recommended Rank Tracking Architectures

The right architecture depends on how often you need answers and what triggers action. Organizations often don't require a giant platform on day one. They need a setup that is dependable, cheap enough to maintain, and easy to reason about when a number looks wrong.

Daily batch model

This is the most practical default. A scheduled job runs once per day, pulls rankings for a defined keyword set, stores the results, and feeds reporting or downstream PPC logic.

It works best when:

- decisions happen on a daily rhythm,

- the keyword set is stable,

- cost control matters more than minute-by-minute freshness.

The upside is reliability. The downside is that sudden SERP changes won't be visible until the next run.

On-demand checker

This pattern is useful for analysts and account managers who need a quick answer during audits, landing page reviews, or geo checks. A user submits a keyword, location, and device, then the app fetches a live result.

This model is best when speed of insight matters for ad hoc work. It is not ideal as the only architecture because people tend to overuse it, duplicate checks, and create noisy data with no schedule discipline.

A few design rules help:

- Require location and device inputs

- Show the matched URL, not just the rank

- Log every request for later analysis

- Cache recent requests to avoid duplicate spending
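Those rules fit in one small handler. In this sketch, `fetch` is a hypothetical provider wrapper returning `position` and `matched_url`, the cache is a plain dict without expiry for brevity, and the request log is a list; in production each would be a real store.

```python
from datetime import datetime, timezone

VALID_DEVICES = {"desktop", "mobile"}


def on_demand_check(keyword, location, device, fetch, cache, request_log):
    """Handle one analyst request: validate inputs, reuse cached results, log everything."""
    if not location or device not in VALID_DEVICES:
        raise ValueError("location and a device of 'desktop' or 'mobile' are required")
    key = (keyword.strip().lower(), location.strip().lower(), device)
    cached = key in cache
    if not cached:
        cache[key] = fetch(keyword, location, device)
    result = cache[key]
    request_log.append({
        "keyword": keyword,
        "location": location,
        "device": device,
        "cached": cached,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    # Return the matched URL alongside the rank so reviewers see which page ranks.
    return {"position": result.get("position"), "matched_url": result.get("matched_url")}
```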

Event-triggered checks

This is the advanced option. Instead of checking everything on a timer, the system triggers a rank lookup when another signal changes. That might be a cost spike, conversion drop, impression loss, or sudden search term growth in paid search.

This pattern fits teams that already have mature monitoring around campaigns. It can be efficient because rank checks happen when something changes. It can also become messy if the trigger rules are vague or too sensitive.

Trigger rank checks from meaningful business signals, not from curiosity.
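A cost spike is one of the signals named above. This sketch triggers a rank lookup when today's cost for a search term exceeds its trailing average by a ratio; the 1.5x ratio and 7-day window are assumptions, and the minimum-history check is one guard against rules that fire too eagerly.

```python
from statistics import mean


def should_trigger_rank_check(daily_cost, spike_ratio=1.5, min_history=7):
    """Trigger when today's cost exceeds the trailing average by spike_ratio.

    daily_cost: chronological daily costs for one search term; the last value is today.
    """
    if len(daily_cost) < min_history + 1:
        return False  # not enough history to define "normal"
    baseline = mean(daily_cost[-(min_history + 1):-1])
    return baseline > 0 and daily_cost[-1] >= spike_ratio * baseline
```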

Choosing the right pattern

A simple decision table usually settles it:

| Architecture | Best for | Main trade-off |
|---|---|---|
| Daily batch | Stable reporting and recurring optimization | Less immediate |
| On-demand | Research and spot checks | Easy to overuse |
| Event-triggered | Advanced automation tied to performance changes | More engineering complexity |

For most PPC teams, the winning sequence is straightforward. Start with daily batch collection. Add an on-demand tool for analysts. Move to event-triggered checks only after your paid data and rank data are already clean, trustworthy, and joined in one place.

If you want to turn search term cleanup, match type handling, negative keyword building, and campaign expansion into a faster workflow, Keywordme is built for exactly that kind of PPC operations work. It helps teams move from messy manual handling to a cleaner optimization process without juggling disconnected tools.
