A Guide to Landing Page A/B Testing


You're pumping money into ads with tools like Keywordme to get people to your site. Fantastic. But what happens once they land? That's where the real work begins.

Landing page A/B testing isn't just a buzzword; it's how you figure out what actually works. You're simply showing two versions of a page to different groups of visitors to see which one gets more people to click, buy, or sign up. It’s the single most powerful way to make sure your ad budget isn't just an expense, but a serious investment.

Why Landing Page Testing Is Your Biggest Untapped Opportunity

Look, getting traffic is only half the story. The real win is getting those visitors to do something—fill out a form, make a purchase, book a demo. Too many marketers get tunnel-vision on acquisition and completely neglect the on-page experience.

This creates a massive "leaky bucket" problem. You're spending a ton to fill the bucket with visitors, but they're slipping right through the cracks because the page itself isn't doing its job. Think of it like a beautiful storefront display that leads into a messy, confusing store. People might look, but they won't buy. That’s what happens when you skip A/B testing.

The Stark Reality of Untested Pages

The data here is pretty eye-opening. The average landing page converts at a dismal 2.35%. But the top-tier pages? They're pulling in conversion rates of 11.45% or even higher. That’s a massive gap—the kind that separates struggling campaigns from wildly profitable ones.

What’s wild is how few marketers are actually doing anything about it.

Despite its proven impact, a surprisingly low 17% of marketers are consistently using A/B testing to improve their landing pages. This is a golden opportunity for anyone willing to put in the work and adopt a structured testing mindset.

We've seen it time and again: companies that commit to testing can boost their sales by an average of 49%. They turn mediocre pages into genuine revenue machines. This isn't just a marketing "tactic"; it's a fundamental part of any serious PPC strategy.

To put this into perspective, let's look at the numbers.

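A/B Testing Adoption vs. Impact at a Glance

- Average landing page conversion rate: 2.35%

- Top-tier landing page conversion rate: 11.45% or higher

- Marketers consistently A/B testing their landing pages: 17%

- Average sales boost for companies that commit to testing: 49%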

The takeaway is clear: the gap between the average and the great is bridged by data-driven testing.

Turning Ad Spend into a True Investment

Every single dollar you spend on ads drives a potential customer to your landing page. If you're not testing, you're basically crossing your fingers and hoping your first guess was the right one. A structured testing process flips that script, replacing guesswork with hard data.

Here's what it unlocks:

- You find the friction. Pinpoint exactly what’s causing visitors to hesitate or leave.

- You understand your audience. Discover which headlines, images, and offers truly connect with them.

- You maximize your ROI. Even a small lift in conversions makes every ad dollar you spend work that much harder.
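
To make that last point concrete, here is a quick back-of-the-envelope sketch in Python. The budget and cost per click are made-up numbers chosen purely for illustration; the two conversion rates are the average and top-tier figures quoted earlier.

```python
def cost_per_conversion(ad_spend: float, cost_per_click: float, conversion_rate: float) -> float:
    """Return how much ad spend it takes to produce one conversion."""
    visitors = ad_spend / cost_per_click
    conversions = visitors * conversion_rate
    return ad_spend / conversions


# Hypothetical budget and click cost, for illustration only.
AD_SPEND = 1_000.00
COST_PER_CLICK = 2.00

average_page = cost_per_conversion(AD_SPEND, COST_PER_CLICK, 0.0235)
top_page = cost_per_conversion(AD_SPEND, COST_PER_CLICK, 0.1145)

print(f"Average page (2.35%):   ${average_page:.2f} per conversion")   # $85.11
print(f"Top-tier page (11.45%): ${top_page:.2f} per conversion")       # $17.47
```

Same budget, same traffic, but each conversion costs roughly a fifth as much on the better page.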

Ultimately, effective landing page testing is a critical piece of the larger puzzle of website conversion rate optimization. By constantly iterating, you build a powerful conversion engine that works for you around the clock. If you're ready to dive deeper, our guide on conversion rate optimization best practices is a great place to start.

Building Your Pre-Test Game Plan

Before you start tweaking button colors or frantically rewriting headlines, you need a solid game plan. A successful landing page A/B test isn’t about throwing spaghetti at the wall to see what sticks; it’s a disciplined process that starts long before you ever hit "launch." Think of it as your pre-flight checklist.

Without this prep work, you're just guessing. You might get lucky once in a while, but you’ll never build a repeatable system for growth. The whole point is to shift from "I think this will work" to "I have the data to prove this works."

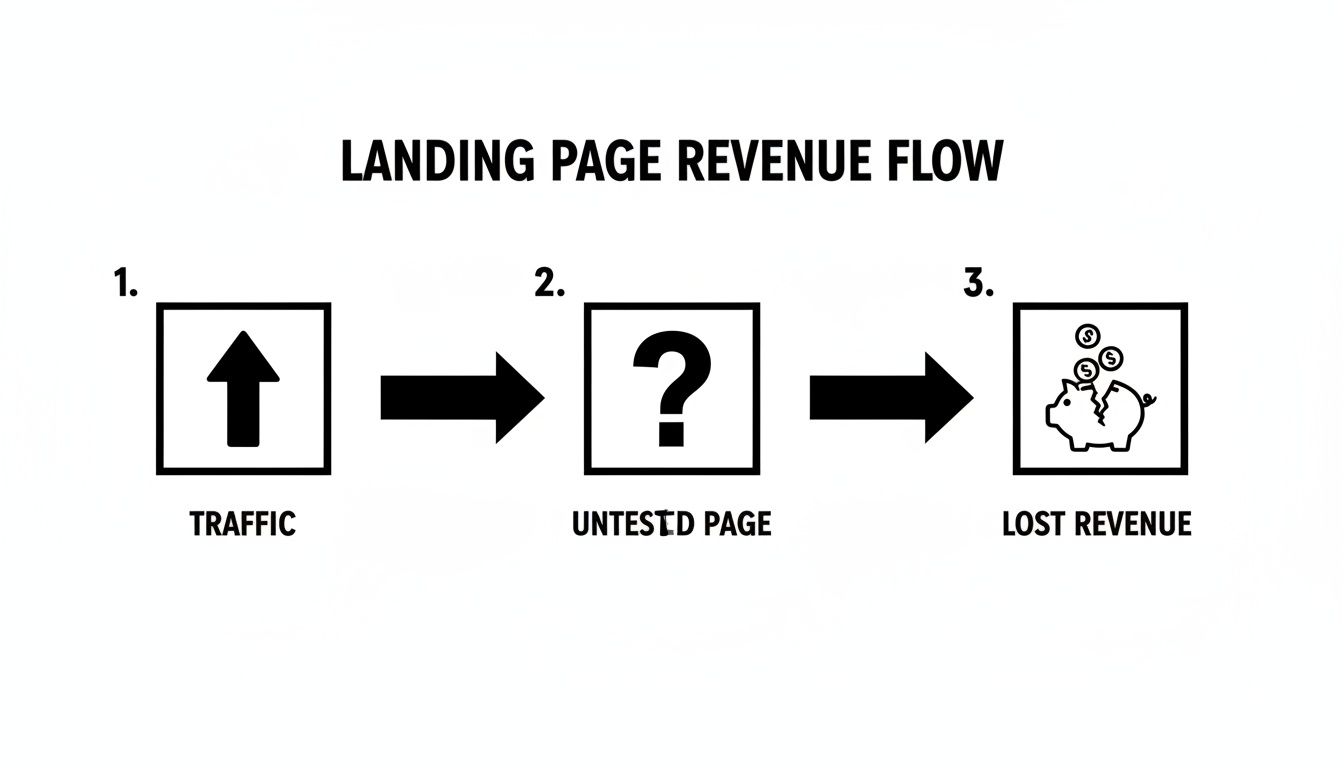

This simple diagram shows exactly what happens when you skip this step: valuable traffic hits an unoptimized page, and potential revenue just leaks away.

It’s a stark visual, right? Not testing is like having a hole in your pocket. You’re letting customers and cash slip through your fingers without even knowing why.

Craft a Testable Hypothesis

Every worthwhile experiment I’ve ever run started with a strong hypothesis. This isn't just a shot in the dark. It's a clear, testable statement that lays out exactly what you’re changing, what you expect to happen, and why you expect it.

A weak hypothesis sounds something like, "Making the CTA button bigger will get more clicks." It’s vague and, frankly, lazy. It doesn't get to the why.

Now, a strong hypothesis is different. It’s specific and strategic: "By changing the CTA button text from 'Submit' to 'Get My Free Audit,' we will increase form submissions by 15% because the new copy is more specific, value-driven, and directly reflects what the user actually wants."

See the difference? A solid hypothesis always has three parts:

- The Change: Changing the CTA button copy.

- The Expected Outcome: A 15% lift in form submissions.

- The Rationale (The "Because"): The new copy is more compelling and aligned with the user's goal.

This structure forces you to think critically about why a change might work, which is how you get smarter with every test you run.

Define Your Key Metrics

"More conversions" is a fine goal, but it's not a metric. You need to get specific about the Key Performance Indicators (KPIs) that truly matter to your business. Your primary metric, above all else, must tie directly back to your hypothesis.

Your primary KPI is the one metric that will determine if the test is a win or a loss. Everything else is just noise. For a lead-gen page, that’s almost always form submissions. For an e-commerce page, it might be 'add to cart' events or, even better, completed purchases.

But don't stop there. You also need to keep an eye on secondary or "guardrail" metrics. These are crucial because they stop you from accidentally breaking something else while trying to fix one thing. A win isn't a win if it tanks another important metric.

Common Secondary Metrics to Monitor:

- Bounce Rate: Did your brilliant new design actually make more people leave right away?

- Time on Page: Are people more engaged with the new version, or less?

- Cost Per Acquisition (CPA): If your conversion rate goes up but the lead quality plummets, your CPA might actually get worse. Ouch.

- Average Order Value (AOV): For e-commerce, did a change that boosted 'add to cart' clicks also lead to smaller orders?

Thinking through these metrics beforehand gives you the full story of your test's impact. It’s what prevents you from celebrating a false victory based on a single, misleading number. This is the bedrock of any serious optimization strategy.
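
One way to picture a guardrail check is the small Python sketch below. The `VariantMetrics` class, the 5% tolerance, and the sample numbers are all assumptions made up for this example, and time on page is left out because whether "more" is better depends on the page. It also says nothing about statistical significance; it only flags secondary metrics that moved the wrong way.

```python
from dataclasses import dataclass


@dataclass
class VariantMetrics:
    conversion_rate: float        # primary KPI
    bounce_rate: float            # guardrail, lower is better
    cost_per_acquisition: float   # guardrail, lower is better
    average_order_value: float    # guardrail, higher is better


def guardrail_violations(control: VariantMetrics, variant: VariantMetrics,
                         tolerance: float = 0.05) -> list[str]:
    """List guardrail metrics that got worse by more than `tolerance` (relative)."""
    violations = []
    if variant.bounce_rate > control.bounce_rate * (1 + tolerance):
        violations.append("bounce_rate")
    if variant.cost_per_acquisition > control.cost_per_acquisition * (1 + tolerance):
        violations.append("cost_per_acquisition")
    if variant.average_order_value < control.average_order_value * (1 - tolerance):
        violations.append("average_order_value")
    return violations


# Hypothetical results: the variant converts better, but CPA jumps.
control = VariantMetrics(0.040, 0.55, 50.0, 80.0)
variant = VariantMetrics(0.048, 0.56, 62.0, 79.0)

print(guardrail_violations(control, variant))  # ['cost_per_acquisition']
```

A variant that lifts the primary KPI but returns a non-empty list here deserves a second look before you call it a win.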

How to Design Test Variants That Actually Win

Alright, you’ve got a solid hypothesis. Now comes the creative part: building your challenger, Version B. This is where your theory gets put to the test, and the key is to be strategic, not just different.

The single biggest mistake I see people make is changing way too much at once. They'll tweak the headline, swap the hero image, rewrite the CTA, and change the form layout. Sure, they might get a lift, but they'll have absolutely no clue why. It's a hollow victory because you can't replicate it.

Instead, let your hypothesis guide you to a single, meaningful change. This is how you get clean data and real insights from your landing page A/B testing.

Zero In on High-Impact Elements

Let's be honest, not all page elements are created equal. Some pack a much bigger punch than others. If you want to see a real impact, you need to focus on the parts of the page that most influence a visitor's psychology and decision-making.

Here's my shortlist of where to start:

- The Headline: It’s your handshake, your first impression. Try pitting a benefit-driven headline against one that pokes at a pain point. For example, "Our SEO Services" is bland. "Stop Losing Customers to Your Competitors" is a hook.

- The Call-to-Action (CTA): This is the finish line. The CTA button is your last chance. Experiment with the text ("Get Started" vs. "Claim My Free Trial"), the color (something that pops off the page usually works best), and where you put it (above the fold is a classic for a reason).

- Hero Image/Video: Your main visual sets the entire mood. Test an image of your product in action against a photo of a smiling customer. Or, see if a short, punchy explainer video can outperform a static image.

- Social Proof: How you show you're trustworthy is huge. Try testing specific customer testimonials against a row of impressive client logos. You could even A/B test a prominent "star rating" against a powerful, direct quote.

The goal is to make a change that’s actually significant enough for someone to notice and react to. Fiddling with two slightly different shades of blue probably isn't going to move the needle. But changing your CTA from a passive "Submit" to an urgent "Get Your Free Quote Now"? That’s a change rooted in real user motivation.

Choosing the Right Tools for the Job

Good news: you don't need to be a developer to run a powerful landing page A/B test. Modern tools have made the technical side incredibly simple, with most offering visual editors that let you point, click, and change things without ever looking at code.

Here’s a quick breakdown of your options:

Most of these tools work the same way: you add a small snippet of code to your website. After that, you're free to use their editor to create your variant, set your goals, and hit "launch." They handle all the heavy lifting of splitting traffic and tracking results, so you can focus on the strategy.
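
If you're curious what "splitting traffic" can look like under the hood, here is a minimal Python sketch of one common approach: hashing a visitor ID so the same person always lands in the same variant. This is an illustration, not how any particular tool does it, and the `assign_variant` function and the experiment name are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a visitor to a variant with a roughly even split."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same visitor always gets the same page for a given experiment.
print(assign_variant("visitor-123", "cta-copy-test"))
print(assign_variant("visitor-123", "cta-copy-test"))
```

Including the experiment name in the hash means a visitor's bucket in one test doesn't decide their bucket in the next one.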

Running the Test and Understanding the Data

Alright, you've pushed the button and your test is live. High-five! Now for the truly hard part: sitting on your hands and waiting.

I know the temptation to refresh your results every five minutes is real. We've all been there. But peeking too early is a classic rookie mistake that leads to gut decisions based on half-baked data. You have to let the test run its course to get a clear, reliable picture. This isn't about getting a fast answer; it's about getting the right one.

The hard truth is that most tests don't deliver those jaw-dropping wins you read about in case studies. In reality, only about 1 in 8 tests (roughly 12%) actually produces a statistically significant improvement. This is exactly why a structured process and a bit of patience are so important.

As a rule of thumb, I don’t even start looking at the data seriously until each variant has seen at least 1,000 unique visitors and hit 100 conversions. Anything less is just noise.
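
That rule of thumb is easy to turn into a quick check. The sketch below is just one way to write it in Python; the function name and the sample numbers are made up for illustration.

```python
MIN_VISITORS = 1_000     # unique visitors per variant
MIN_CONVERSIONS = 100    # conversions per variant


def ready_to_review(results: dict[str, tuple[int, int]]) -> bool:
    """`results` maps variant name -> (unique_visitors, conversions)."""
    return all(
        visitors >= MIN_VISITORS and conversions >= MIN_CONVERSIONS
        for visitors, conversions in results.values()
    )


# Hypothetical mid-test snapshot: variant B is still short on conversions.
print(ready_to_review({"A": (1200, 110), "B": (1150, 95)}))  # False
```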

What Does "Statistical Significance" Actually Mean?

So, how do you know when the results are solid? The term everyone throws around is statistical significance. It sounds complicated, but it's really just a measure of how confident you can be that your results aren't a random fluke.

Most testing tools shoot for a 95% confidence level. Roughly speaking, this means that if your two pages truly performed the same, a gap as large as the one you're seeing would show up by random chance less than 5% of the time. It's the statistical evidence you need before you go making big changes.

Think of it like a political poll. You wouldn't trust a poll that only surveyed ten people, right? The same logic applies here. You need enough data to have real confidence in the outcome. Let the numbers build before you jump to conclusions.
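
For the curious, here is a rough Python sketch of one standard way to check significance: a two-proportion z-test. Your testing tool may use a different method, so treat this as an illustration rather than a replica of what any product does. The visitor and conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical test: A converts 100 of 2,000 visitors, B converts 130 of 2,000.
p_value = two_proportion_p_value(100, 2000, 130, 2000)
print(f"p-value: {p_value:.3f}")
print("Significant at 95%" if p_value < 0.05 else "Not significant yet")
```

A p-value below 0.05 corresponds to the 95% confidence level most tools report.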

Digging Deeper: When the Results Aren't Obvious

Sometimes a test ends and the overall numbers are... flat. No clear winner, no obvious loser. Don't write it off as a failure! This is your cue to start digging deeper by segmenting your results.

Slicing the data can reveal some fascinating hidden patterns. Here are a few segments I always check:

- By Traffic Source: Did your variant resonate more with people from Google Ads versus organic search? This can tell you a ton about user intent.

- By Device: Maybe that new layout is a killer on desktop but a total dud on mobile. That’s a critical insight.

- By New vs. Returning Visitors: A bold new headline might be great for grabbing first-timers but could fall flat with people who already know your brand.
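
If you can export raw visit data, slicing it like this takes only a few lines. The Python sketch below assumes a made-up record format (one dictionary per visit with `variant`, `device`, and `converted` fields); your analytics export will look different.

```python
from collections import defaultdict


def conversion_rate_by_segment(visits: list[dict], segment_key: str) -> dict:
    """Return {(segment, variant): conversion rate} for the chosen segment."""
    totals = defaultdict(lambda: [0, 0])  # (segment, variant) -> [conversions, visitors]
    for visit in visits:
        key = (visit[segment_key], visit["variant"])
        totals[key][0] += int(visit["converted"])
        totals[key][1] += 1
    return {key: conversions / visitors for key, (conversions, visitors) in totals.items()}


# Tiny hypothetical dataset, far too small for real conclusions.
rows = [("A", "desktop", True), ("A", "desktop", False),
        ("B", "desktop", True), ("B", "desktop", True),
        ("A", "mobile", True), ("A", "mobile", False),
        ("B", "mobile", False), ("B", "mobile", False)]
visits = [{"variant": v, "device": d, "converted": c} for v, d, c in rows]

for (device, variant), rate in sorted(conversion_rate_by_segment(visits, "device").items()):
    print(f"{device:8} {variant}: {rate:.0%}")
```

Keep in mind that each segment holds only a slice of your traffic, so a difference inside one segment needs its own significance check before you act on it.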

This table is a quick guide I use to make sense of what the numbers are telling me and decide what to do next.

Interpreting Your A/B Test Outcomes

At the end of the day, every test is a learning opportunity, win or lose.

For this kind of deep-dive analysis, a proper GA4 integration is non-negotiable. It’s the only way to reliably track events, conversions, and the entire user journey across your test variants. The goal isn't just to find a winning page; it's to learn something valuable from every single experiment you run.

How to Scale Your Wins and Build an Optimization Culture

Finding a winning variant in your landing page A/B testing isn’t the finish line. Honestly, it’s just the starting pistol for the next race. The real magic happens when you turn that one successful test into a repeatable, long-term engine for growth. This is how you go from just running experiments to actually building a culture of optimization.

Once you've confirmed a winner with solid statistical confidence, the first move is obvious but you'd be surprised how often it gets delayed: implement it for 100% of your traffic. Don't let your awesome new variant just sit in the testing tool. Push it live! Get it working for your business right away and start banking that lift in conversions you worked so hard for.

Document Everything You Learn

Rolling out the winner is only half the battle. The most valuable thing you get from any test—win or lose—is the insight. You need a central place to keep all this knowledge. It doesn't have to be fancy; a simple spreadsheet or a shared document works perfectly.

For every test you run, log these details:

- The Original Hypothesis: What did you think would happen and why?

- Screenshots: A simple visual of the control (A) and the variant (B). It saves a thousand words.

- Key Metrics: Note the final conversion rates, confidence level, and any other secondary metrics that moved.

- The Key Takeaway: Boil it down to one sentence. Something like, "Our audience clicks more on CTAs that promise immediate value ('Get My Audit') instead of generic commands ('Submit')."
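
A spreadsheet is plenty, but if you'd rather keep the log in code, the checklist above maps neatly onto a small record type. This Python sketch is one possible layout; the `ExperimentRecord` fields, file names, and numbers are all hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    hypothesis: str
    control_screenshot: str   # path or link to the image of version A
    variant_screenshot: str   # path or link to the image of version B
    control_rate: float
    variant_rate: float
    confidence_level: float
    takeaway: str


def write_log(records: list[ExperimentRecord], file) -> None:
    """Write the records as CSV rows, one test per row, with a header."""
    writer = csv.DictWriter(file, fieldnames=[f.name for f in fields(ExperimentRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))


# Hypothetical entry for illustration.
entry = ExperimentRecord(
    hypothesis="Changing CTA text from 'Submit' to 'Get My Free Audit' lifts form submissions by 15%",
    control_screenshot="cta-test-control.png",
    variant_screenshot="cta-test-variant.png",
    control_rate=0.050,
    variant_rate=0.058,
    confidence_level=0.95,
    takeaway="CTAs that promise immediate value beat generic commands.",
)

buffer = io.StringIO()  # swap in open("ab_test_log.csv", "w", newline="") to save a file
write_log([entry], buffer)
print(buffer.getvalue())
```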

This log becomes your team’s collective memory. It keeps you from repeating failed tests and, more importantly, it becomes a goldmine for smarter ideas. This is how you create a feedback loop where one test’s results directly inspire the next hypothesis.

The goal here is to get away from one-off, isolated tests and build a structured, ongoing program. An optimization culture sees every landing page not as a finished product, but as a living hypothesis that can always be improved. This mindset is what generates compounding returns on your ad spend.

Create a Testing Roadmap

With a growing library of insights, you can start planning your next moves with a testing roadmap. This isn't just a random list of ideas. It's a prioritized plan built on what you've already learned. You start ranking future test ideas based on what your own data is telling you.

For instance, if your last test proved that adding specific customer testimonials boosted trust, maybe the next test is to see if video testimonials work even better. See? You're not just guessing anymore; you're building on proven success.

You can explore a variety of marketing automation best practices to make this process even smoother. When you systematize your approach like this, every click you get from your keyword strategy becomes a valuable data point. This is how landing page A/B testing transforms from a simple tactic into a core driver of your business.

Got Questions About Landing Page Testing? Let's Clear a Few Things Up

Alright, so we've covered the playbook. But if you're like most people dipping their toes into A/B testing, a few questions are probably rattling around in your head. That's perfectly normal. Let's tackle some of the most common ones I hear.

How Long Do I Need to Run This Thing?

This is probably the number one question, and the honest answer is: it depends. The goal isn't to hit a certain number of days; it's to reach statistical significance. Think of this as the point where you're confident the results aren't just a fluke. Most pros aim for a 95% confidence level.

As a practical starting point, I always recommend running a test for at least two full weeks, weekends included. This helps iron out the weird peaks and valleys you see on weekends versus weekdays. If your page doesn't get a ton of traffic, you'll simply need to be more patient and let it run longer to collect enough data.
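
If you want a rough number before you launch, a standard sample-size formula for comparing two conversion rates can give you a ballpark. The Python sketch below assumes 95% confidence and 80% statistical power (the power figure is my assumption, not something your tool necessarily uses), and the baseline rate, expected lift, and daily traffic are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_run(baseline_rate: float, relative_lift: float, daily_visitors: int,
                variants: int = 2, min_days: int = 14) -> int:
    """Estimated test length in days, never shorter than the two-week minimum."""
    needed = visitors_per_variant(baseline_rate, relative_lift) * variants
    return max(min_days, ceil(needed / daily_visitors))


# Hypothetical page: 5% baseline, hoping for a 15% relative lift, 500 visitors a day.
print(visitors_per_variant(0.05, 0.15), "visitors per variant")
print(days_to_run(0.05, 0.15, daily_visitors=500), "days")
```

Notice how quickly the required traffic grows when the lift you're hunting for is small. That's the math behind "be more patient."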

Can I Test More Than Just an 'A' and a 'B'?

You bet. Testing multiple variations at once is called an A/B/n test, and it can be a great way to throw a few different ideas against the wall to see what sticks.

The only real downside? It’s a numbers game. If you have your original page (the control) plus two new challengers, you're now splitting your traffic three ways. That means each version gets a smaller piece of the pie, and it will take longer to get a clear, statistically significant winner. If you're working with limited traffic, my advice is to keep it simple with a straightforward A vs. B test.
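
The arithmetic behind that trade-off is simple enough to sketch in Python. The numbers here (10,000 visitors needed per version, 1,000 visitors a day) are hypothetical, and the sketch assumes an even split across versions.

```python
from math import ceil


def days_needed(visitors_per_version: int, daily_visitors: int, versions: int) -> int:
    """Days until every version has its share, assuming an even traffic split."""
    return ceil(visitors_per_version * versions / daily_visitors)


# Hypothetical: each version needs 10,000 visitors and the page gets 1,000 a day.
for versions in (2, 3, 4):
    print(f"{versions} versions: {days_needed(10_000, 1_000, versions)} days")
# 2 versions: 20 days
# 3 versions: 30 days
# 4 versions: 40 days
```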

Look, the point here isn't just about finding a "winner." It's about learning something new about your audience with every single experiment. Even a test that bombs teaches you what doesn't resonate, and that insight is pure gold.

What if My Test Is a Total Wash?

It happens. A lot, actually. You run a test for weeks, and the results come back flat. Neither version really beat the other. When this happens, it usually points to one of two things:

- Your change was too timid. Swapping out one shade of blue for a slightly different one is rarely going to rock the boat.

- Your hypothesis missed the mark. You thought the headline was the problem, but maybe your visitors don't even care about the headline. The real friction point could be somewhere else entirely.

Don't chalk it up as a failure. An inconclusive test is just feedback telling you to think bigger. Go back to your research, form a bolder hypothesis, and try something more dramatic.

Ready to stop guessing and start growing? Keywordme helps you get the right traffic to your landing pages, so you have quality visitors for every test. Optimize your Google Ads campaigns in a fraction of the time and turn your ad spend into predictable revenue. Start your free 7-day trial of Keywordme today.
